Have you ever wondered why some apps can instantly tell you which celebrity you look like or how security systems unlock a phone just by glancing at your face? Behind every one of these experiences is the same powerful technology: face-matching AI.

In 2026, the demand for AI face recognition app development has surged across industries, from fintech and healthcare to entertainment and e-commerce. Whether you’re building a security verification tool, a fun celebrity look-alike app, or a personalized retail experience, face-matching technology is no longer a luxury reserved for tech giants. It’s accessible, scalable, and increasingly expected.

But here’s where most founders and product teams get stuck: knowing what to build is easy. Knowing how to build a face-matching app that’s fast, accurate, and compliant is an entirely different challenge.

Which ML models should you use?

What features are non-negotiable?

And how much is this actually going to cost?

This guide answers all of it.

We’ll walk you through the core architecture, the machine learning models powering today’s most accurate systems, must-have features, and a realistic cost breakdown, so you can make informed decisions before writing a single line of code.

If you’re serious about bringing an AI-powered product to life, partnering with an experienced artificial intelligence development company will significantly cut down your time-to-market and technical risk.

Let’s start building.

Face-Matching Apps Are Beyond Entertainment

While “Who is your celebrity look-alike?” dominates social media feeds, the core technology, biometric pattern matching, is a critical pillar in modern custom software development.

1. Insurance Fraud Detection

Insurance providers lose billions annually to “ghost” claims. Face-matching AI validates the identity of claimants in real time, matching live selfies against government-issued IDs. By integrating liveness detection, we prevent “spoofing” attempts using photos or high-resolution videos of another person.

2. Heritage and Genealogy

Platforms like MyHeritage have proven that users are deeply invested in their roots. AI models can now compare low-resolution historical photographs against modern databases to identify lost relatives or track lineage across generations with high statistical confidence.

3. Marketing & Brand Engagement

Brands use face-matching to gamify the user experience. Imagine a campaign where a user scans their face and is matched with a specific “product persona” or a historical figure related to the brand story. This level of Generative AI integration transforms passive marketing into interactive experiences.

Celebrity Look-Alike Apps: Technical Case Studies

These four applications reveal distinct architectural choices driven by different performance and scale requirements:

1. StarByFace

Consumer / Viral

Uses a 128-dimensional embedding from a VGGFace-based model against a pre-indexed celebrity database of ~10,000 labeled faces. A cosine similarity threshold of 0.6 yields entertaining rather than forensic results, intentionally lenient to maximize shareable matches.

Model: VGGFace (VGG16) · Distance: Cosine

2. Google Arts & Culture

Museum / Cultural

Matches selfies to fine art portraits using custom CNN embeddings fine-tuned on 70,000+ digitized artworks. Unique challenge: matching live faces to painted, sketched, and sculpted subjects requiring style-invariant features beyond standard face recognition.

Model: Custom CNN · Backend: Google Cloud Vision AI

3. DeepFace Celebrity Finder

Open Source Demo

Open-source implementation using IMDB dataset (~18,000 celebrity images). Generates 2622-dimensional VGG-Face vectors. Full database vectorization takes ~11 minutes on a single CPU core; similarity search returns in seconds. FAISS index improves search speed 40–60× over brute-force.

Model: VGG-Face / FaceNet · Index: FAISS (IVF)

4. Ancestry DNA Stories

Genealogy / Heritage

Matches users to historical family photos using ArcFace embeddings with age-invariance fine-tuning. Handles photos ranging from 1860s daguerreotypes to modern selfies. Privacy architecture: embeddings never stored persistently, computed in-session, compared, then discarded within 24 hours.

Model: ArcFace (ResNet100) · Distance: Euclidean

How Face-Matching AI Actually Works

Most people have no idea what happens in the half-second between their face appearing on a camera and a door unlocking or an account opening. That gap is where all the interesting engineering lives.

The global biometrics market is projected to cross $82 billion by 2027. A pipeline of five steps plus one liveness layer sits under most of it.

Here’s what’s actually going on.

Capture — Garbage In, Garbage Out

Everything starts with an image. And the quality of that image determines how well everything else performs.

Before any AI runs, the system checks: is this blurry? Too dark? Is the face partially cut off? A well-built system rejects bad inputs rather than processing them and returning a confident wrong answer. That quality gate is not glamorous, but skipping it causes more production failures than any other single decision.
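To make the quality gate concrete, here is a minimal sketch in plain Python. It assumes the image has already been converted to a grayscale grid of 0–255 values, and it uses two common heuristics: mean brightness for exposure and the variance of a Laplacian filter for sharpness. The function names and every threshold are illustrative assumptions, not tuned production values.

```python
from statistics import mean, pvariance


def laplacian_variance(gray):
    """Variance of a 4-neighbour Laplacian response; low values suggest blur."""
    height, width = len(gray), len(gray[0])
    responses = []
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            lap = (gray[y - 1][x] + gray[y + 1][x] + gray[y][x - 1]
                   + gray[y][x + 1] - 4 * gray[y][x])
            responses.append(lap)
    return pvariance(responses)


def quality_gate(gray, min_brightness=40, max_brightness=220, min_sharpness=100.0):
    """Return (ok, reasons). All thresholds are placeholder assumptions."""
    reasons = []
    brightness = mean(pixel for row in gray for pixel in row)
    if brightness < min_brightness:
        reasons.append("too dark")
    elif brightness > max_brightness:
        reasons.append("overexposed")
    if laplacian_variance(gray) < min_sharpness:
        reasons.append("too blurry")
    return (not reasons, reasons)


# A high-contrast checkerboard passes; a flat, dark frame is rejected.
sharp = [[255 if (x + y) % 2 else 0 for x in range(8)] for y in range(8)]
flat_dark = [[10] * 8 for _ in range(8)]
print(quality_gate(sharp))
print(quality_gate(flat_dark))
```

The point of returning reasons rather than a bare boolean is UX: the app can tell the user to find better light or hold still instead of silently failing.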

Detect — Finding the Face

A CNN-based detection model (MTCNN, RetinaFace, or a YOLO variant) scans the image and draws a bounding box around every face it finds. Good detectors handle tilted heads, partial shadows, multiple faces, and faces at different distances without breaking a sweat.

What comes out isn’t just a box. It’s also a confidence score and a set of facial landmarks: the coordinates of the eyes, nose tip, and mouth corners. Those five points feed directly into the next step.

Align — Normalizing What Goes In

This step gets skipped in tutorials. It shouldn’t be.

Faces arrive at different angles, sizes, and offsets. Using the landmarks from detection, the system applies a transformation that normalizes every face into the same consistent position: neutral, frontal, same scale. The feature extraction models downstream were trained on aligned faces. Give them unaligned ones and accuracy drops noticeably.
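As a rough illustration of alignment, the sketch below derives a similarity transform (rotation, uniform scale, translation) from just the two eye landmarks, using complex numbers to keep the math short. This is a simplification: production pipelines typically fit all five landmarks with a least-squares estimate. The canonical eye positions are hypothetical values for a 112×112 crop.

```python
import cmath
import math

# Hypothetical target eye positions inside a 112x112 aligned crop.
CANON_LEFT_EYE = complex(38.0, 52.0)
CANON_RIGHT_EYE = complex(74.0, 52.0)


def similarity_from_eyes(left_eye, right_eye):
    """Return (a, b) so that p -> a * p + b maps the detected eyes onto the canonical ones."""
    src_left, src_right = complex(*left_eye), complex(*right_eye)
    a = (CANON_RIGHT_EYE - CANON_LEFT_EYE) / (src_right - src_left)  # rotation + scale
    b = CANON_LEFT_EYE - a * src_left                                # translation
    return a, b


def apply_transform(a, b, point):
    """Map one (x, y) landmark into the aligned coordinate frame."""
    z = a * complex(*point) + b
    return (z.real, z.imag)


# A tilted face: the right eye sits 30 px lower than the left eye.
a, b = similarity_from_eyes((100, 120), (160, 150))
angle = math.degrees(cmath.phase(a))
scale = abs(a)
print(f"rotate by {angle:.1f} degrees, scale by {scale:.3f}")
print(apply_transform(a, b, (100, 120)), apply_transform(a, b, (160, 150)))
```

The same `(a, b)` pair would then be used to warp the whole image, so every face reaches the encoder at the same position and scale.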

Encode — Turning a Face Into a Number

This is the core of it. The aligned face image goes into a deep neural network (ArcFace and FaceNet are the most widely used) and comes out as a vector of floating-point numbers, usually 128 or 512 of them.

That vector is the mathematical identity fingerprint for that face. It’s not a photo. It doesn’t look like anything. But it’s been trained so that different photos of the same person produce vectors that cluster tightly together, while photos of different people land far apart in that space.

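This is also why storing the template rather than the original image is the right call, especially in regulated industries like healthcare or fintech, where biometric data storage gets audited. A 512-dimensional float vector is far harder to turn back into a recognizable face than a stored photo, although research on template inversion shows embeddings are not perfectly irreversible, so they should still be encrypted. That matters legally.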

Match — How Similar Is Similar Enough?

The system compares the query vector with stored templates, most commonly using cosine similarity, and returns a score. That score means nothing on its own. What matters is the threshold you set.

Too low: you start accepting people who shouldn’t get in.

Too high: you start blocking people who should.

Every deployment tunes this differently depending on the risk profile. A phone unlock can be more lenient. A KYC verification for a financial account cannot.
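The threshold trade-off is easy to see with a few numbers. The sketch below computes cosine similarity and then measures the false accept rate (FAR) and false reject rate (FRR) at three thresholds. The score lists are made-up illustration data, not benchmark results.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two embeddings (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm


def error_rates(genuine_scores, impostor_scores, threshold):
    """FAR: impostor pairs accepted. FRR: genuine pairs rejected."""
    far = sum(s >= threshold for s in impostor_scores) / len(impostor_scores)
    frr = sum(s < threshold for s in genuine_scores) / len(genuine_scores)
    return far, frr


# Made-up similarity scores for same-person and different-person pairs.
genuine = [0.82, 0.76, 0.91, 0.58, 0.69]
impostor = [0.12, 0.35, 0.48, 0.61, 0.27]

for threshold in (0.4, 0.6, 0.8):
    far, frr = error_rates(genuine, impostor, threshold)
    print(f"threshold={threshold}: FAR={far:.0%}, FRR={frr:.0%}")
```

On this toy data the lenient threshold lets impostors through while the strict one rejects most genuine users, which is exactly why a phone unlock and a KYC flow end up with different settings.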

At scale, this becomes a search problem. Comparing against ten users is trivial. Comparing against 10 million in under a second needs vector search tooling; FAISS and pgvector are the two most commonly used. They index embeddings so the system finds the closest match without scanning the entire database row by row.
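For a sense of what the vector index is replacing, here is the brute-force baseline in plain Python: score the query against every stored embedding and keep the best few. The gallery names and vectors are toy assumptions; a real system would hold 128- or 512-dimensional embeddings and hand this job to a library such as FAISS or pgvector.

```python
import heapq
import math


def normalize(vec):
    """Scale a vector to unit length so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def top_k(query, gallery, k=3):
    """Exhaustive search: one dot product per stored embedding."""
    q = normalize(query)
    scored = ((sum(a * b for a, b in zip(q, emb)), name)
              for name, emb in gallery.items())
    return heapq.nlargest(k, scored)


# Toy 3-dimensional "embeddings", stored pre-normalized.
gallery = {
    name: normalize(vec)
    for name, vec in {
        "person_a": [0.9, 0.1, 0.0],
        "person_b": [0.1, 0.9, 0.1],
        "person_c": [0.7, 0.3, 0.1],
    }.items()
}

matches = top_k([1.0, 0.2, 0.0], gallery, k=2)
for score, name in matches:
    print(f"{name}: {score:.3f}")
```

The cost of this loop grows linearly with the gallery, which is fine for ten users and hopeless for 10 million.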

Liveness Detection — The Final Layer That Makes It Real

A facial recognition system without liveness detection isn’t a security system. It’s a system that can be fooled with a printed photo.

Passive liveness analyzes texture, micro-movements, and depth cues in the frame to confirm a real human is present — no action required from the user. Active liveness asks for a blink, a head turn, a specific look. More assurance, more friction.

Most production systems, across KYC onboarding, employee access, and patient ID, run passive liveness by default and only trigger an active challenge when confidence is low. Asking every user to perform a gesture routine adds drop-off without adding proportionate security for the majority of legitimate users.
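The passive-first, active-on-doubt policy can be sketched as a small decision function. The score range, the two cut-offs, and the `LivenessDecision` type are all hypothetical; a real liveness model would supply the passive score and the challenge result.

```python
from dataclasses import dataclass


@dataclass
class LivenessDecision:
    accepted: bool
    challenge_required: bool
    reason: str


def decide_liveness(passive_score, active_passed=None,
                    accept_at=0.90, reject_below=0.30):
    """Passive check first; escalate to an active challenge only in the grey zone.

    passive_score is assumed to be a 0..1 confidence from a passive liveness model.
    active_passed is None until the user has completed a blink or head-turn challenge.
    """
    if passive_score >= accept_at:
        return LivenessDecision(True, False, "passive check confident")
    if passive_score < reject_below:
        return LivenessDecision(False, False, "passive check indicates spoof")
    if active_passed is None:
        return LivenessDecision(False, True, "low confidence: request active challenge")
    outcome = "passed" if active_passed else "failed"
    return LivenessDecision(active_passed, False, f"active challenge {outcome}")


print(decide_liveness(0.97))                      # accepted without friction
print(decide_liveness(0.55))                      # asks for a challenge
print(decide_liveness(0.55, active_passed=True))  # accepted after the challenge
```

Most legitimate users only ever hit the first branch, which is how this design keeps drop-off low.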

Best ML Models for Face Matching

Model selection drives accuracy ceiling, inference latency, and infrastructure cost. This table consolidates verified benchmark data from LFW (Labeled Faces in the Wild) and internal production metrics:

| Model | LFW Accuracy | Embedding Dim | Backbone | Inference Speed | Best For |
| --- | --- | --- | --- | --- | --- |
| FaceNet (Google) | 99.63% | 128d / 512d | Inception-ResNet | Fast | Similarity apps, look-alike, verification |
| ArcFace | 99.82% | 512d | ResNet-100 | Medium | High-security, fraud detection, ID verification |
| VGGFace / VGGFace2 | 98.9% | 2622d | VGG-16 | Slow | Celebrity matching, heritage apps |
| DeepFace (Facebook) | 97.35% | 4096d | Custom CNN | Slow | Research, large enterprise with own infra |
| OpenFace | 93.80% | 128d | NN4 (Torch) | Very Fast | On-device / edge, real-time camera apps |
| SFace | 99.56% | 128d | Shufflenet | Very Fast | Mobile apps, low-latency requirements |
| AWS Rekognition | ~99.5% | Managed | Proprietary | Fast (API) | Enterprise, rapid integration, scalable cloud |
| GhostFaceNet | 99.7% | 512d | GhostNet | Very Fast | Real-time mobile, resource-constrained hardware |

Production recommendation: ArcFace + FAISS is the gold standard for enterprise face-matching in 2025. FaceNet remains the best open-source choice for consumer apps where inference cost is a constraint. Avoid VGGFace in commercial products; its 2622-d output creates storage/compute overhead without proportional accuracy gains over ArcFace 512-d.

Human beings achieve 97.53% accuracy on the LFW facial recognition benchmark. ArcFace at 99.82% and FaceNet at 99.63% have both surpassed human-level performance, and the gap keeps widening as training datasets grow. AWS Rekognition can identify up to 100 faces in a single image and search against databases of tens of millions of identities, a scale no human team can come close to replicating.

What that means practically: the accuracy ceiling isn’t the problem anymore. The problem is implementation: how the pipeline is built, what data goes in, how templates are stored, and whether the system holds up across different demographics and real-world conditions.

That’s exactly where many deployments quietly fail. Real-world breakdowns of face recognition app privacy issues and implementation pitfalls in production systems are the clearest guide to what goes wrong and how to avoid it.

Building the Matching Database

The database layer is where most face-matching apps fail at scale. Storing raw images is the wrong approach; you store embeddings, not pixels.

Database Architecture Options

| Solution | Type | Scale | Search Speed | Privacy-Ready | Use Case |
| --- | --- | --- | --- | --- | --- |
| FAISS (Facebook AI) | In-memory vector index | Up to 1B vectors | ⚡ Sub-millisecond | Yes | Celebrity databases, entertainment apps |
| pgvector (Postgres) | SQL + vector extension | Millions of vectors | ✓ Fast | Yes | Insurance fraud, KYC, mid-scale systems |
| Pinecone | Managed vector DB | Billions of vectors | ⚡ Sub-10ms | Partial | Enterprise search, ancestry platforms |
| Weaviate | Open-source vector DB | 100M+ | ✓ Fast | Yes | Self-hosted enterprise, GDPR jurisdictions |
| AWS Rekognition Collection | Managed cloud API | Millions of faces | ✓ Fast (API latency) | Managed | Rapid integration, no infra management |

Approximate Nearest Neighbor Search (ANN)

Brute-force similarity search compares every query embedding against every stored embedding, viable for databases under ~50,000 faces. Above that threshold, ANN indexing (FAISS IVF, HNSW, or Product Quantization) reduces search time by 40–60× with less than 1% accuracy loss. For an ancestry app with 10 million historical photos or an insurance database with 5 million claimants, ANN is non-negotiable.
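The idea behind an inverted-file (IVF) index fits in a few lines: bucket every stored vector under its nearest centroid, then at query time scan only the closest bucket or buckets. The toy class below is a teaching sketch, not how FAISS is implemented. In particular, the centroids are hard-coded assumptions here, whereas a real IVF index learns them with k-means.

```python
from collections import defaultdict


def dist2(u, v):
    """Squared Euclidean distance."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


class TinyIVF:
    """Toy inverted-file index: vectors are grouped by nearest centroid."""

    def __init__(self, centroids):
        self.centroids = centroids
        self.cells = defaultdict(list)

    def add(self, label, vec):
        cell = min(range(len(self.centroids)),
                   key=lambda i: dist2(vec, self.centroids[i]))
        self.cells[cell].append((label, vec))

    def search(self, query, nprobe=1):
        """Scan only the nprobe closest cells; return (best_label, vectors_scanned)."""
        order = sorted(range(len(self.centroids)),
                       key=lambda i: dist2(query, self.centroids[i]))
        candidates = [item for i in order[:nprobe] for item in self.cells[i]]
        if not candidates:
            return None, 0
        best = min(candidates, key=lambda item: dist2(query, item[1]))
        return best[0], len(candidates)


index = TinyIVF(centroids=[(0.0, 0.0), (10.0, 10.0)])
for label, vec in [("a", (0.5, 0.2)), ("b", (1.0, 1.0)),
                   ("c", (9.0, 9.5)), ("d", (10.5, 10.0))]:
    index.add(label, vec)

print(index.search((9.2, 9.4), nprobe=1))  # scans half the vectors
print(index.search((9.2, 9.4), nprobe=2))  # scans everything, same answer
```

Raising `nprobe` trades speed for recall: a query that falls near a cell boundary may have its true nearest neighbour in the next cell over, which is where the small accuracy loss of ANN search comes from.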

Critical implementation note: Never store raw face images in your matching database if you can avoid it. Store only encrypted embeddings plus a non-reversible identifier. This architecture means a database breach exposes encrypted floating-point vectors rather than photographs, a fundamental privacy advantage.
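One way to build such a record, sketched with the Python standard library only: derive the identifier with a keyed hash (HMAC-SHA256) so it cannot be reversed or recomputed without the server-side key, and serialize the embedding as compact float32 bytes. The key, the user ID format, and the record layout are assumptions for illustration. Encryption of the blob is deliberately left out because the standard library has no authenticated encryption; use a vetted cryptography library for that step.

```python
import hashlib
import hmac
import struct


def pseudonymous_id(user_id, secret_key):
    """Keyed hash of the user ID; not reversible without the secret key."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def pack_embedding(embedding):
    """Serialize floats as little-endian float32 (4 bytes per dimension)."""
    return struct.pack(f"<{len(embedding)}f", *embedding)


def unpack_embedding(blob):
    """Inverse of pack_embedding."""
    return list(struct.unpack(f"<{len(blob) // 4}f", blob))


# Hypothetical key and user; in production the key would live in a secrets manager.
SECRET_KEY = b"replace-with-a-key-from-your-secrets-manager"

record = {
    "id": pseudonymous_id("user-42", SECRET_KEY),
    # Encrypt this blob with a vetted library before writing it to storage.
    "embedding": pack_embedding([0.5, -1.0, 0.25]),
}
print(record["id"][:16], len(record["embedding"]), "bytes")
```

At float32 precision a 512-dimensional embedding is 2,048 bytes, which also makes the storage rows in the cost tables easy to sanity-check.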

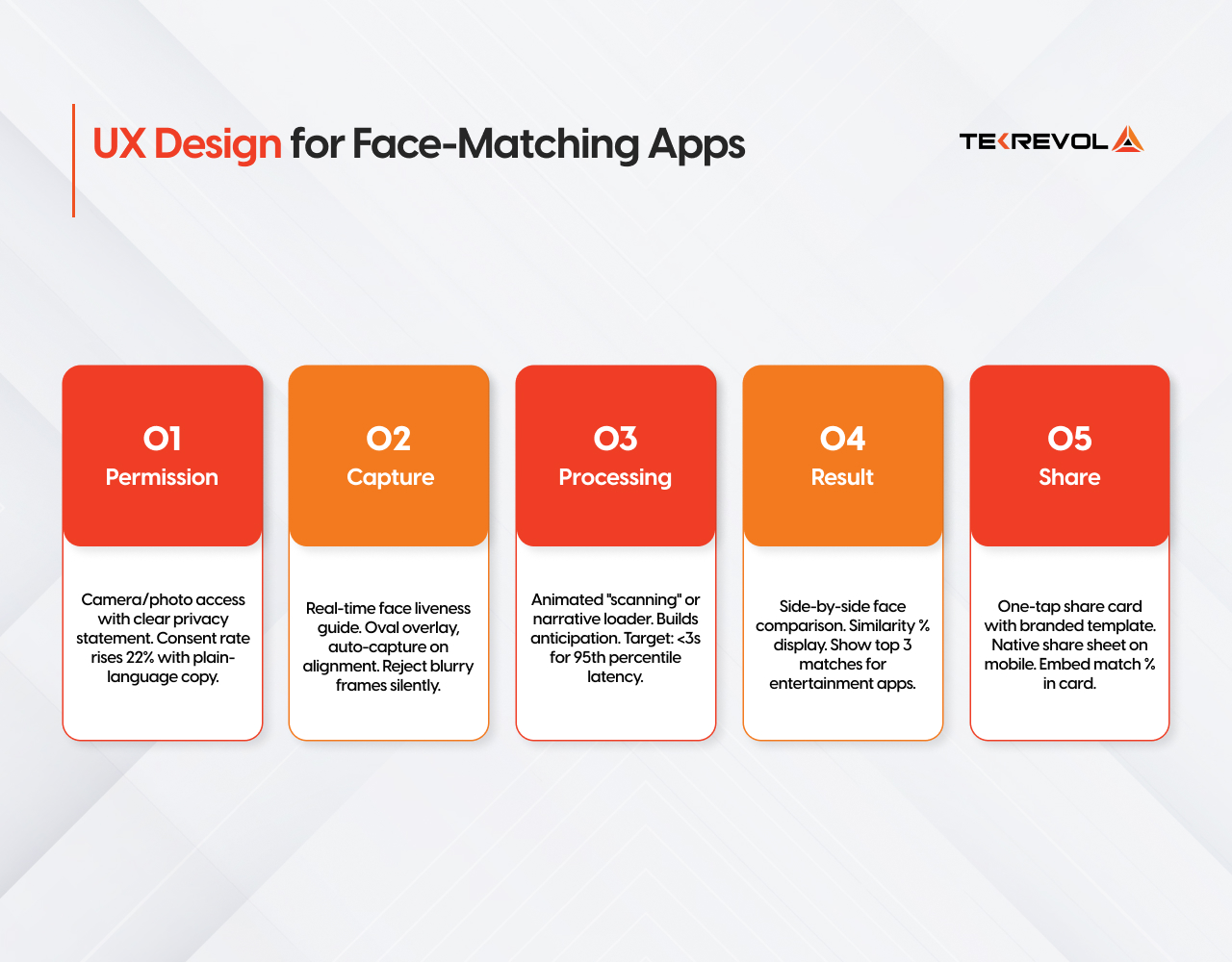

UX Design for Face-Matching Apps

The technical pipeline is only half the product. In entertainment and brand engagement contexts, the user experience around the match result determines virality, session time, and share rate. These are the five screens that matter:

Engagement Mechanics That Drive Retention

Show the percentage, not just the name. A match result like “72% Zendaya” feels far more engaging than a simple label — it sparks curiosity, invites comparison, and naturally gets people talking. Even better, offering secondary matches (like a 3rd or 4th result) adds a fun twist. Apps that show something like “You’re also 64% similar to another celebrity” often see significantly higher share rates because users enjoy the surprise factor and share it with friends.

Small UX details matter too. Instead of instantly showing results, adding a short 2–3 second “analyzing facial structure” animation can actually improve perceived quality, even if the processing is already done. It makes the experience feel more advanced, intentional, and premium.

These kinds of thoughtful engagement patterns are commonly explored in modern on-demand app development services and AI app experiences, especially where user interaction and retention depend on subtle psychological design choices rather than just raw technical accuracy.

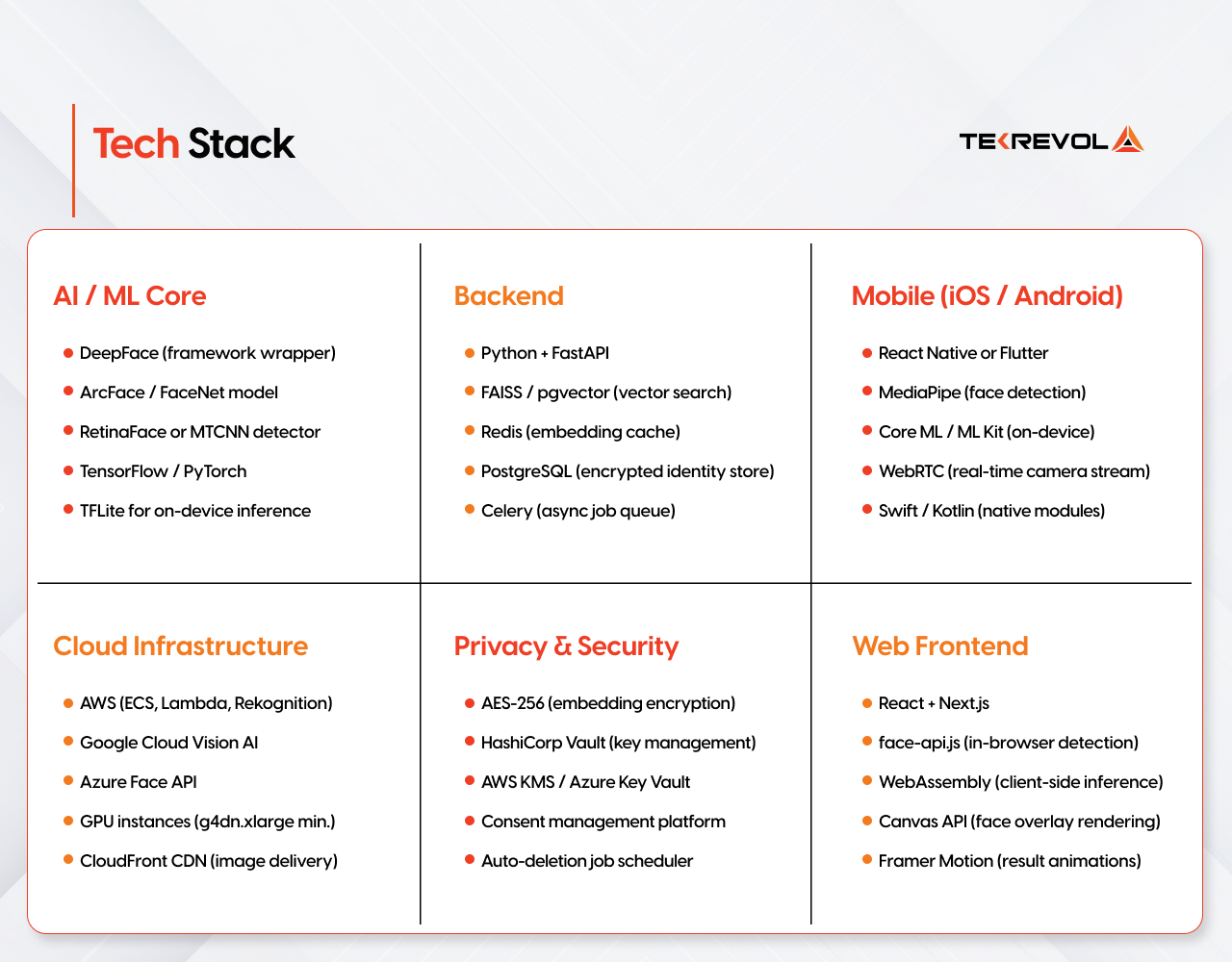

Tech Stack

The optimal stack differs by deployment target and scale. Below is the production-proven configuration TekRevol recommends for full-stack face-matching apps in 2025:

On-Device vs. Cloud Inference

| Factor | On-Device (TFLite / Core ML) | Cloud API (Rekognition / Vision AI) |
| --- | --- | --- |
| Latency | ~50–200ms | ~300–800ms (network dependent) |
| Privacy | Data never leaves device | Images transmitted to cloud |
| Cost at Scale | Low marginal cost | $0.001–0.004 per face API call |
| Model Quality | Quantized (slight accuracy loss) | Full-precision, continuously updated |
| Database Search | Not feasible for large databases | Scales to millions of faces |

Hybrid architecture is the production answer: on-device for face detection and alignment (privacy-preserving), cloud for embedding search against large celebrity or fraud databases (scale-demanding). This pattern also degrades gracefully offline: the app captures and queues requests, then syncs when connected.
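To see how the per-call price in the comparison above turns into a monthly bill, here is a back-of-the-envelope calculation. It assumes exactly one face API call per user per day and a 30-day month; both are simplifying assumptions, and real usage (retries, multiple matches per session, volume discounts) will shift the numbers.

```python
def monthly_api_cost(users_per_day, calls_per_user, price_per_call, days=30):
    """Rough monthly spend on a pay-per-call face API."""
    return users_per_day * calls_per_user * price_per_call * days


# 100K users/day at the low and high ends of the $0.001-0.004 per-call range.
low = monthly_api_cost(100_000, 1, 0.001)
high = monthly_api_cost(100_000, 1, 0.004)
print(f"${low:,.0f} to ${high:,.0f} per month")
```

Under these assumptions a mid-scale app spends a few thousand dollars a month on API calls alone, which is why on-device inference becomes attractive as volume grows.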

Development Cost

Cost ranges below are based on a US/UK-based custom software development engagement using a dedicated team model. Offshore or nearshore blended rates reduce these figures by 35–55%.

| Build Tier | Scope | Timeline | Cost Range | Best For |
| --- | --- | --- | --- | --- |
| MVP / Prototype | Pre-trained model (FaceNet/ArcFace), single platform (iOS or Android), basic matching flow, <50K face database | 8–12 weeks | $30,000 – $60,000 | Validation, fundraising demos, brand campaigns |
| Consumer App | Dual platform, custom celebrity/heritage database, share mechanics, basic privacy controls | 4–6 months | $80,000 – $150,000 | Entertainment apps, look-alike products, ancestry features |
| Enterprise Platform | Custom model fine-tuning, fraud detection workflows, GDPR compliance, admin dashboard, high-availability infra | 6–10 months | $150,000 – $350,000 | Insurance fraud, KYC platforms, large ancestry databases |
| Cloud API Integration Only | AWS Rekognition or Azure Face API integration into existing app, basic matching flow, no custom model | 3–6 weeks | $15,000 – $35,000 | Fastest-to-market; adds cloud usage fees long-term |

Ongoing Infrastructure Costs (Monthly)

| Component | Small App (<10K users/day) | Mid-Scale (100K users/day) | Enterprise (1M+ users/day) |
| --- | --- | --- | --- |
| GPU Compute (inference) | $200–500 | $2,000–5,000 | $15,000–40,000 |
| Vector Database (FAISS/pgvector) | $50–200 | $500–1,500 | $3,000–10,000 |
| Storage (encrypted embeddings) | $20–80 | $200–600 | $1,000–4,000 |
| CDN (result images) | $30–100 | $300–800 | $2,000–6,000 |
| AWS Rekognition API (if used) | $500–2,000 | $5,000–20,000 | Custom contract |

Using pre-trained open-source models (ArcFace, FaceNet) instead of training from scratch eliminates 60–70% of model training costs. For entertainment apps, FAISS plus a pre-indexed celebrity database of 20,000–50,000 faces performs comparably to a custom-trained model at a fraction of the cost.

Ethics and Privacy in Face-Matching Apps

Biometric data, which face embeddings legally constitute in GDPR, CCPA, and BIPA jurisdictions, carries the highest legal exposure of any data category your product will handle. Non-compliance is not a theoretical risk: regulatory fines under GDPR can reach €20 million or 4% of global annual turnover, whichever is higher.

Non-Negotiable Compliance Requirements

Explicit, granular consent:

Consent for face matching must be separate from general terms of service. Users must understand exactly what is processed, where it’s stored, and for how long. Pre-ticked checkboxes are invalid under GDPR Article 7.

Data minimization:

Process and store only what the feature requires. For entertainment apps, this means: compute embedding → perform search → return result → discard embedding. No persistent biometric storage unless the feature explicitly requires it (e.g., “save my result”).

Right to erasure implementation:

A user requesting deletion of their biometric data must trigger deletion of all stored embeddings without undue delay, and in practice within one month of the request (GDPR Articles 12 and 17). Implement this as a first-class backend function, not an afterthought.

On-device processing preference:

Wherever technically feasible, process face embeddings on-device (TFLite/Core ML). This eliminates data transmission risk and simplifies GDPR compliance — data that never leaves the device is outside most transmission regulations.

Retention limits with automated enforcement:

Set maximum retention periods and enforce them with automated deletion jobs. Heritage apps can justify longer retention (user explicitly building a family tree) with documented consent; entertainment apps cannot justify retention beyond the session.
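Erasure and retention enforcement can share one small storage interface. The sketch below keeps embeddings in memory with a timestamp, exposes an explicit `erase_user` call for deletion requests, and a `purge_expired` job meant to run on a schedule. The class, method names, and the 24-hour limit are hypothetical; a real system would back this with a database and an audit log.

```python
from datetime import datetime, timedelta, timezone


class EmbeddingStore:
    """In-memory stand-in for a biometric template store with a retention limit."""

    def __init__(self, max_age):
        self.max_age = max_age
        self._records = {}  # user_id -> (embedding, stored_at)

    def save(self, user_id, embedding, now):
        self._records[user_id] = (embedding, now)

    def erase_user(self, user_id):
        """Handle a deletion request; returns True if anything was removed."""
        return self._records.pop(user_id, None) is not None

    def purge_expired(self, now):
        """Scheduled job: delete every record older than max_age."""
        expired = [uid for uid, (_, stored_at) in self._records.items()
                   if now - stored_at > self.max_age]
        for uid in expired:
            del self._records[uid]
        return len(expired)

    def __len__(self):
        return len(self._records)


store = EmbeddingStore(max_age=timedelta(hours=24))
t0 = datetime(2025, 1, 1, tzinfo=timezone.utc)
store.save("old-user", [0.1, 0.2], now=t0)
store.save("new-user", [0.3, 0.4], now=t0 + timedelta(hours=20))

purged = store.purge_expired(now=t0 + timedelta(hours=30))
erased = store.erase_user("new-user")
print(purged, erased, len(store))
```

Passing `now` explicitly keeps the purge logic testable, so the retention rule can be verified in CI rather than discovered during an audit.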

Anti-spoofing and liveness detection:

For any security or identity-critical use case (insurance, KYC), implement liveness checks (blink detection, 3D depth maps) to prevent photo replay attacks. This is also a fraud prevention measure, not just a compliance one.

Bias auditing:

Most open-source face datasets are demographically skewed toward white adult faces. Production models must be audited and fine-tuned on diverse datasets before commercial deployment to avoid discriminatory accuracy disparities across skin tones, ages, and genders.
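A bias audit starts with a simple measurement: run genuine (same-person) pairs through the matcher and compare the false non-match rate per demographic group at the production threshold. The sketch below shows that calculation. The group labels and scores are invented for illustration, and what counts as an acceptable disparity is a policy decision this code does not make.

```python
from collections import defaultdict


def fnmr_by_group(trials, threshold):
    """False non-match rate per group.

    trials: iterable of (group, similarity) for pairs known to be the same person.
    """
    totals = defaultdict(int)
    misses = defaultdict(int)
    for group, score in trials:
        totals[group] += 1
        if score < threshold:
            misses[group] += 1
    return {group: misses[group] / totals[group] for group in totals}


def max_disparity(rates):
    """Gap between the worst-served and best-served group."""
    return max(rates.values()) - min(rates.values())


# Invented genuine-pair scores for two groups.
trials = [
    ("group_a", 0.80), ("group_a", 0.70), ("group_a", 0.90), ("group_a", 0.65),
    ("group_b", 0.75), ("group_b", 0.55), ("group_b", 0.50), ("group_b", 0.85),
]

rates = fnmr_by_group(trials, threshold=0.6)
print(rates, max_disparity(rates))
```

A gap like the one in this toy data means one group is rejected far more often than another at the same threshold, which is the signal to collect more diverse data or fine-tune before launch.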

Children’s data (COPPA / GDPR-K):

If your app is accessible to users under 13 (US) or 16 (EU), additional consent mechanisms and data handling restrictions apply. Age-gating or parental consent flows are mandatory.

The EU AI Act classifies remote biometric identification systems as “high-risk AI” requiring conformity assessments, and it prohibits real-time remote biometric identification in publicly accessible spaces for law enforcement outside narrow exceptions. Entertainment apps operating in private, user-consented contexts fall under a lower risk tier, but they still require documented data protection impact assessments (DPIAs) for any large-scale biometric processing.

How TekRevol Builds AI Vision Apps

TekRevol’s AI development practice delivers face-matching and computer vision applications through a structured five-phase process designed to move from validated concept to production-grade system without rework-heavy iterations:

| Phase | Deliverable | Duration | Key Activities |
| --- | --- | --- | --- |
| AI Discovery | Technical feasibility + data audit | 1–2 weeks | Model selection, dataset assessment, privacy architecture plan, cost modeling |
| Proof of Concept | Working similarity pipeline on sample data | 2–4 weeks | Model integration, ANN index build, threshold calibration, benchmark testing |
| MVP Build | Functional app (iOS/Android/Web) with core match flow | 6–10 weeks | Full-stack dev, camera UX, result display, basic compliance implementation |
| Production Hardening | Scalable, audited, compliant system | 4–8 weeks | Load testing, GDPR compliance review, bias audit, security penetration testing |
| Launch & Iteration | Live product with monitoring & retraining pipeline | Ongoing | Model drift monitoring, A/B testing of result UX, user feedback loop, dataset expansion |

As an AI development company, we have built production computer vision systems across healthcare diagnostics, retail analytics, and consumer entertainment. Our Generative AI development extends face-matching into synthetic image generation for training data augmentation, a critical capability for brands that need to expand celebrity or character databases without expensive photography shoots.

Our mobile development services team specializes in on-device ML deployment (TFLite, Core ML) to deliver sub-200ms inference on consumer hardware.
